Rating

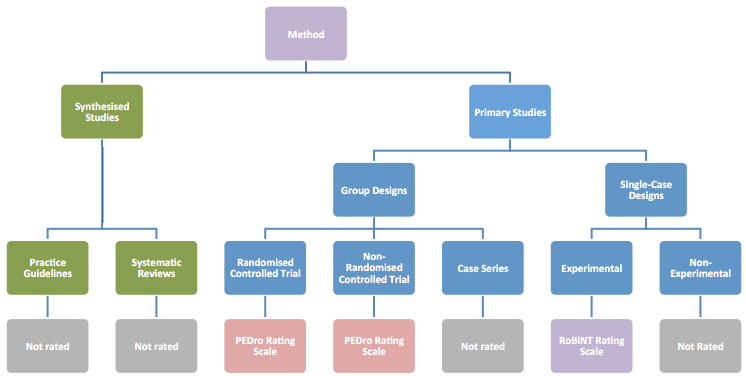

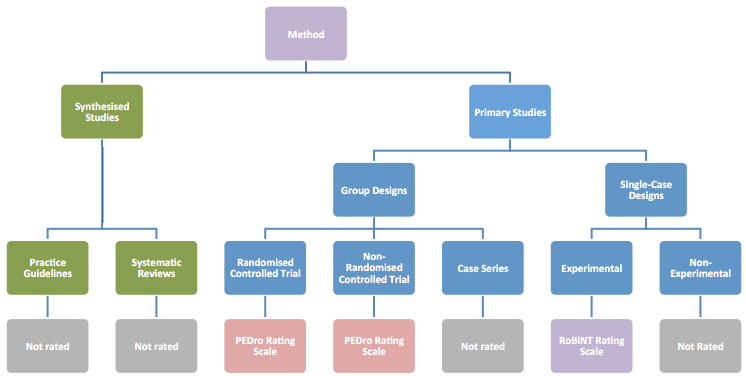

How the PEDro-P and RoBiNT Rating Scales relate to Research Designs

The published literature contains many critical appraisal scales that evaluate the scientific rigor (or methodological quality) of a research study. Different scales are available for different types of research designs. NeuroBITE uses two critical appraisal tools to evaluate primary studies: one developed to evaluate clinical trials using between-group methodology, the PEDro Scale (Maher et al., 2003); the other to evaluate single-case designs, the Risk of Bias in N-of-1 Trials (RoBiNT) Scale (Tate et al., 2013; 2015). NeuroBITE contains multiple types of research designs, covering synthesised studies (systematic reviews and practice guidelines) and primary studies. The primary studies comprise clinical trials (both randomised controlled trials, RCTs, and non-randomised trials), case series, and single-case designs (SCDs, both experimental and non-experimental). Only the clinical trials and single-case experimental designs are formally evaluated for methodological quality. The figure below shows the relationship between the research designs on NeuroBITE and the critical appraisal tools we use.

When rating the research designs of primary studies, the NeuroBITE team occasionally comes across a report in which the way authors describe the design of their study does not correspond with the standard definition of a particular research design. In these cases, we classify reports on the database on the basis of standard definitions of research designs, rather than by author description, in order to provide consistency for NeuroBITE users.

The following definitions are used to classify the research design of the primary studies that we rate:

Randomised Controlled Trials

A randomised controlled trial (RCT) compares at least two treatments/interventions. One of these can be a no-treatment control or a wait-list control condition. Allocation to either intervention or control group is carried out by random mechanism, such as coin toss, random number table, or computer-generated random numbers, and the outcomes are compared.

Intended-to-be-randomised trials are also included in this category.

RCTs are rated using the PEDro-P scale (PDF 93Kb). A maximum of 10 points on the 11-item scale can be obtained (item 1 is related to external validity and is not included in the total score).
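
The scoring rule above can be illustrated with a minimal sketch. The function name `pedro_p_total` and the representation of ratings as a dictionary of item number to True/False are assumptions made for this example only; they are not part of the PEDro-P scale or of any NeuroBITE tool.

```python
# Hypothetical helper for illustration; the name and input format are assumptions.
def pedro_p_total(items: dict[int, bool]) -> int:
    """Return the PEDro-P total for an RCT.

    `items` maps each item number (1-11) to True if the criterion was met.
    Item 1 (external validity) is recorded but excluded from the total,
    so the maximum possible score is 10.
    """
    missing = set(range(1, 12)) - set(items)
    if missing:
        raise ValueError(f"Missing ratings for items: {sorted(missing)}")
    # Only items 2-11 contribute one point each.
    return sum(1 for number in range(2, 12) if items[number])


# Example: a trial meeting every criterion still scores 10, not 11.
all_met = {number: True for number in range(1, 12)}
print(pedro_p_total(all_met))  # 10
```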

Non-Randomised Controlled Trials

A non-randomised controlled trial (non-RCT) is similar to an RCT in that it compares at least two treatments/interventions (one of which can be a no-treatment control or a wait-list control condition) with the exception that participants have not been randomly allocated to groups.

The allocation might be carried out using a selection process, e.g. matching of participants according to sex, age, or other criteria. Other non-random methods include group allocation by date of birth, hospital record number, or alternation; these methods are also referred to as pseudo-randomisation. In other studies, the groups may occur naturally (e.g., comparing right vs left hemisphere lesions) and in these cases randomisation is not possible.

Non-RCTs are also rated using the PEDro-P scale. A maximum of 8 out of 10 points can be obtained for internal validity on the 11-item scale. Item 1 is related to external validity and is not included in the total score. Items 2 and 3 (random and concealed allocation) are not applicable to these types of trials, so non-RCTs cannot receive points for them.
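
As a rough sketch of how the maximum differs between trial types, the example below excludes item 1 for all trials and additionally excludes items 2 and 3 for non-randomised trials. The function names and the `randomised` flag are hypothetical and are used here only to make the arithmetic explicit.

```python
# Hypothetical names for illustration; not part of the PEDro-P scale itself.
NOT_APPLICABLE_TO_NON_RCT = {2, 3}  # random allocation, concealed allocation


def scored_items(randomised: bool) -> set[int]:
    """Items that can earn a point: 2-11 for RCTs, 4-11 for non-RCTs."""
    items = set(range(2, 12))  # item 1 (external validity) is never scored
    if not randomised:
        items -= NOT_APPLICABLE_TO_NON_RCT
    return items


def pedro_p_total(ratings: dict[int, bool], randomised: bool) -> int:
    """Count the criteria met among the items that apply to this trial type."""
    return sum(1 for number in scored_items(randomised) if ratings.get(number, False))


all_met = {number: True for number in range(1, 12)}
print(len(scored_items(randomised=True)))         # 10
print(len(scored_items(randomised=False)))        # 8
print(pedro_p_total(all_met, randomised=False))   # 8
```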

Case Series

A case series refers to a single group of participants who are exposed to one treatment or intervention, with outcomes measured before and after exposure. Because a case series does not contain a control group, the study has low internal validity and so NeuroBITE does not rate the methodological quality of case series.

Single-Case Designs

Frequently used synonyms are single-subject designs, single participant designs, single-system designs, and N-of-1 or N=1 trials.

In single-case designs (SCDs), the basic principle is that the same (single) participant is used as his/her own control to evaluate the effectiveness of one or multiple interventions. SCDs are particularly suitable when investigating the effectiveness of treatments in heterogeneous populations or infrequently occurring conditions, where it can be difficult to achieve an adequately powered sample size. More information can be found about SCDs in the Useful References section.

There are different types of SCDs, the most common being withdrawal/reversal designs (A-B-A-B), or more complex variations of this (A-B-A-C-A-C), multiple-baseline designs, alternating-treatments designs, and changing criterion designs. A crucial factor in SCDs is whether the introduction and the withdrawal of the intervention are experimentally controlled. In some SCDs this is not done, and therefore we make a distinction between experimental and non-experimental SCDs (e.g. a pre-post design is non-experimental).

Single-case experimental designs are rated using the RoBiNT Scale. The two subscales are scored for a maximum of 14 (Internal Validity subscale) and 16 (External Validity and Interpretation subscale) points, and hence the maximum total score is 30 points. Only experimental SCDs are rated on NeuroBITE. Because non-experimental SCDs generally have low internal validity, they are not rated on NeuroBITE.
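
The subscale arithmetic can be sketched as follows. The function `robint_total` and its parameter names are hypothetical, and the sketch works only at the level of subscale scores, using the maxima stated above (14 and 16); it does not model the individual items.

```python
# Hypothetical helper for illustration; subscale maxima are taken from the text above.
INTERNAL_VALIDITY_MAX = 14
EXTERNAL_VALIDITY_INTERPRETATION_MAX = 16


def robint_total(internal_validity: int, external_validity_interpretation: int) -> int:
    """Validate the two RoBiNT subscale scores and return their sum (maximum 30)."""
    if not 0 <= internal_validity <= INTERNAL_VALIDITY_MAX:
        raise ValueError("Internal Validity subscale must be between 0 and 14")
    if not 0 <= external_validity_interpretation <= EXTERNAL_VALIDITY_INTERPRETATION_MAX:
        raise ValueError(
            "External Validity and Interpretation subscale must be between 0 and 16"
        )
    return internal_validity + external_validity_interpretation


print(robint_total(14, 16))  # 30
print(robint_total(6, 9))    # 15
```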